
Runyard Team

@runyard_dev


What LLMs Can You Run with 8GB VRAM?

8GB of VRAM is the most common GPU configuration in 2026. The good news: it's enough to run genuinely useful LLMs locally. The 7B-8B model class at 4-bit quantization is the sweet spot: fast, capable, and small enough (4-5GB of weights) to leave headroom for the KV cache.

Models That Fit in 8GB VRAM

- Llama 3.1 8B (Q4_K_M): ~5GB. Meta's flagship small model. Best general-purpose choice.

- Mistral 7B v0.3 (Q4_K_M): ~4.5GB. Fast, sharp instruction following.

- Qwen 2.5 7B (Q4_K_M): ~4.8GB. Top multilingual and coding performance at 7B.

- DeepSeek Coder 6.7B (Q4_K_M): ~4.2GB. Best coding model for 8GB cards.

- Gemma 2 9B (Q4_K_M): ~5.5GB. Google's best small model, strong reasoning.

- Phi-3 Medium 14B (Q3): ~7.5GB. Microsoft's "small but mighty" model, squeezed in at a 3-bit quant. It fits, but leaves very little room for context.

- LLaVA 1.6 7B: ~5GB. Vision + language, runs on 8GB with headroom.

What You Cannot Run on 8GB

- Llama 3.1 8B at Q8/FP16: needs 8-16GB for weights alone, which fills or exceeds the card before any context is allocated

- Any 13B model at Q4+: needs 8-10GB, risky on exactly 8GB

- Mixtral 8x7B: needs roughly 16GB+ even at Q2, and 24GB+ at Q4

- Llama 3.1 70B: needs 40GB+ at Q4
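The sizes in both lists follow from simple arithmetic: weight size is roughly parameter count times bits per weight, divided by 8. The sketch below is a back-of-the-envelope estimate, not a measurement. The function name is illustrative, and the bits-per-weight values are approximate averages for GGUF-style quants (an assumption; real files differ slightly by architecture).

```python
# Rough weight-size estimate: parameters x bits per weight / 8.
# Bits-per-weight values are approximate averages (assumption), not exact
# figures for any particular model file.
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "Q8_0": 8.5, "FP16": 16.0}


def weight_size_gb(params_billions: float, quant: str) -> float:
    """Approximate weight size in GB (10^9 bytes) for a given quant."""
    bits = BITS_PER_WEIGHT[quant]
    return params_billions * bits / 8


if __name__ == "__main__":
    for label, params in [("8B", 8.0), ("13B", 13.0), ("70B", 70.0)]:
        size = weight_size_gb(params, "Q4_K_M")
        print(f"{label} at Q4_K_M: ~{size:.1f} GB of weights")
```

By this estimate a 13B model at Q4_K_M lands just under 8GB of weights and a 70B model lands above 40GB, which is consistent with the limits listed above.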

Leave 1-2GB headroom for the KV cache. A 7B model at Q4 uses ~4.5GB of weights but needs another 1-2GB for context during inference. Plan for 6GB total, not 4.5GB.
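The headroom figure can be sanity-checked with the standard KV cache size formula: 2 (keys and values) x layers x KV heads x head dimension x context length x bytes per value. This is a minimal sketch, assuming Llama 3.1 8B's published shape (32 layers, 8 KV heads, head dimension 128) and a 16-bit cache. The function name is illustrative, and runtime scratch buffers, which also eat into the 1-2GB, are not modeled.

```python
def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int,
                 context_len: int, bytes_per_value: int = 2) -> float:
    """Approximate KV cache size in GiB for one sequence."""
    # Factor of 2: each layer stores one key tensor and one value tensor.
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_len * bytes_per_value)
    return total_bytes / 1024**3


# Assumed shape for Llama 3.1 8B (grouped-query attention, 8 KV heads).
LLAMA_31_8B = dict(n_layers=32, n_kv_heads=8, head_dim=128)

if __name__ == "__main__":
    for ctx in (4096, 32768):
        size = kv_cache_gib(context_len=ctx, **LLAMA_31_8B)
        print(f"context {ctx}: {size:.2f} GiB of KV cache")
```

Under these assumptions a 4K context costs about 0.5 GiB and a 32K context about 4 GiB, so context length is the first thing to cut on an 8GB card. Models without grouped-query attention store more KV heads per layer and need proportionally more.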

Real-World Performance on 8GB Cards

- RTX 4060 (8GB): Llama 3.1 8B Q4 → 60-70 tok/s

- RTX 3070 (8GB): Llama 3.1 8B Q4 → 50-60 tok/s

- RTX 3060 (8GB): Llama 3.1 8B Q4 → 40-50 tok/s

- RX 6700 XT (12GB, for comparison): Llama 3.1 8B Q4 → 35-45 tok/s (via ROCm)
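Throughput varies with driver version, runtime, quant, and context length, so it is worth measuring your own card. Ollama's non-streaming `/api/generate` response reports `eval_count` (tokens generated) and `eval_duration` (generation time in nanoseconds), which is enough to compute tok/s. The helper name and the sample response below are made up for illustration.

```python
def tokens_per_second(response: dict) -> float:
    """Generation speed from an Ollama /api/generate response dict.

    eval_count is the number of generated tokens; eval_duration is the
    time spent generating them, in nanoseconds.
    """
    seconds = response["eval_duration"] / 1e9
    return response["eval_count"] / seconds


if __name__ == "__main__":
    # Hypothetical response fields, not a real measurement.
    sample = {"eval_count": 120, "eval_duration": 2_000_000_000}
    print(f"{tokens_per_second(sample):.1f} tok/s")
```

Prompt processing is reported separately (`prompt_eval_count` and `prompt_eval_duration`), so this number reflects generation speed only.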

Getting the Most Out of 8GB

1. Use Q4_K_M quantization: the best quality-to-size ratio for 7B models

2. Set a reasonable context length: KV cache grows linearly with context, so 4K needs one-eighth the memory of 32K

3. Close other GPU-heavy apps (games, video editing) before running inference

4. Set Ollama's num_gpu parameter (in a Modelfile or the API options) high enough that every layer is offloaded to the GPU instead of the CPU

5. Try Phi-3 Mini (3.8B) for pure speed: 100+ tok/s on 8GB cards
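Context length and GPU offload can both be set per request through the `options` field of Ollama's REST API, using `num_ctx` and `num_gpu`. The sketch below assumes a local Ollama server on its default port and the `llama3.1:8b` model tag. The helper names are illustrative, and `num_gpu=99` is just a value larger than the model's layer count, used here as an assumed way to request full offload.

```python
import json
import urllib.request

# Default local Ollama endpoint (assumes a standard install).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "llama3.1:8b",
                  num_ctx: int = 4096, num_gpu: int = 99) -> dict:
    """Build a non-streaming generate request with explicit memory settings."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,
        "options": {
            "num_ctx": num_ctx,  # context window; smaller means less KV cache
            "num_gpu": num_gpu,  # number of layers to offload to the GPU
        },
    }


def generate(prompt: str, **kwargs) -> str:
    """Send the request to the local server and return the generated text."""
    body = json.dumps(build_request(prompt, **kwargs)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `generate("Why is the sky blue?", num_ctx=4096)` returns the model's reply. If generation is unexpectedly slow, `ollama ps` shows whether the loaded model is split between CPU and GPU.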

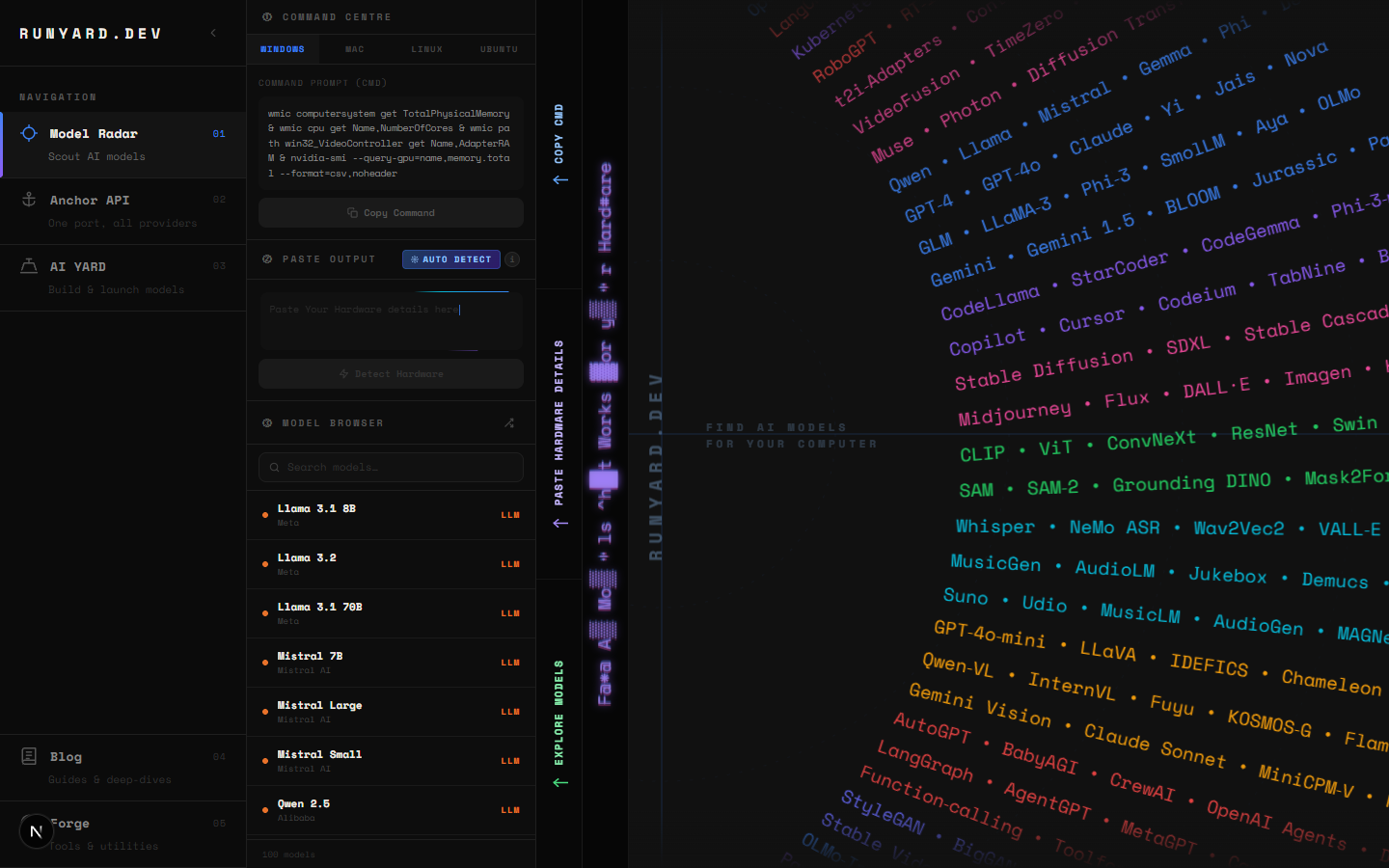

See Exactly What Fits on Your GPU

Stop guessing. Head to runyard.dev, enter your GPU details, and get an instant filtered list of every model that will run comfortably on 8GB VRAM — ranked by quality, speed, and use-case fit.

