Contents

Tags

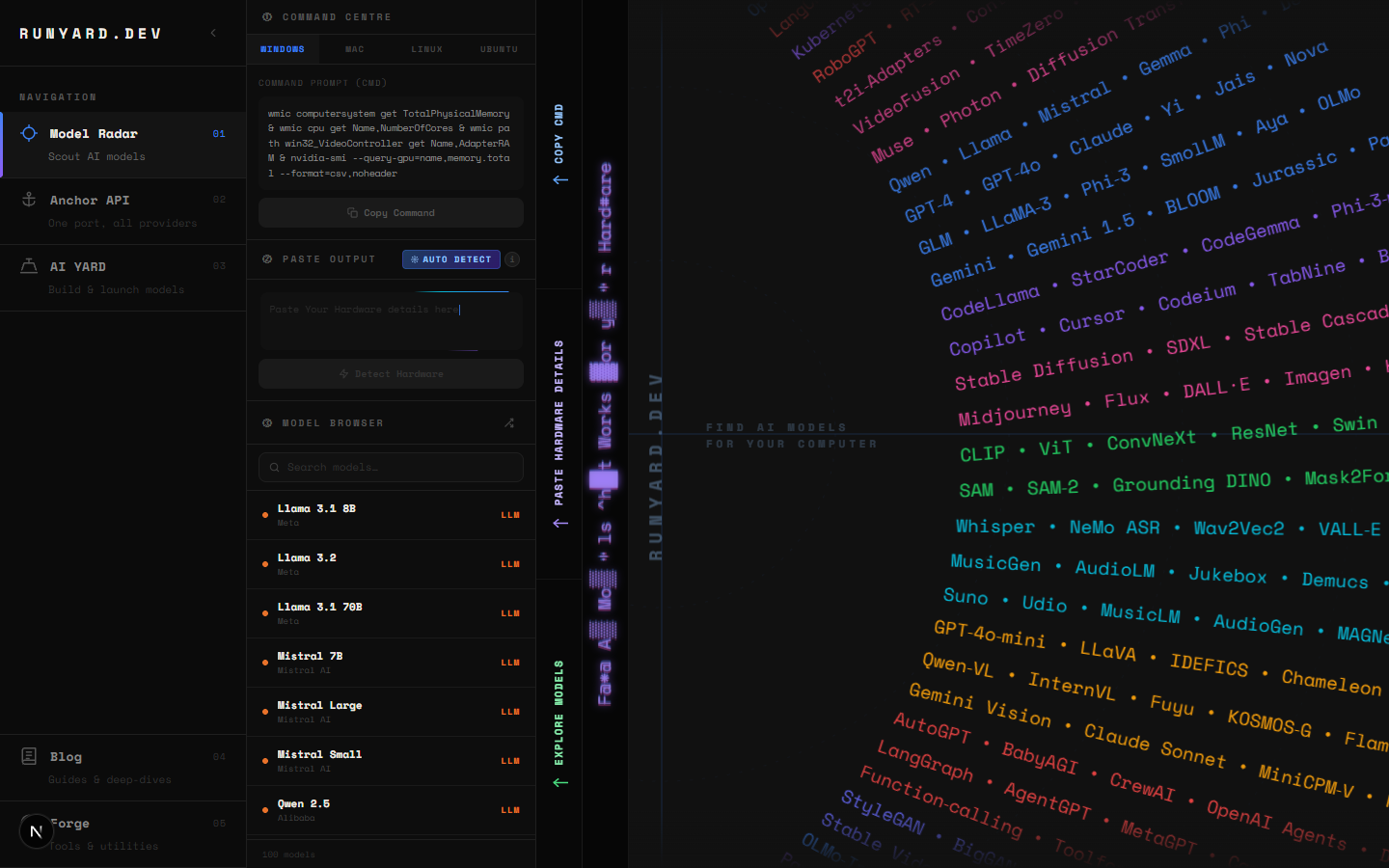

Best GPU for Running Local LLMs in 2026

GPU selection for local LLMs comes down to three variables: VRAM (which determines which models fit), memory bandwidth (which determines inference speed), and price. More VRAM is almost always better, but the link between memory bandwidth and tokens per second (tok/s) is what most buyers miss.

The Rankings (Best to Value)

1. RTX 4090 24GB: Best single GPU. 1,008 GB/s bandwidth. ~85-95 tok/s on Llama 3.1 8B Q4.

2. RTX 4080 Super 16GB: About 73% of the 4090's memory bandwidth (736 GB/s) for $400 less. Strong value.

3. RTX 4070 Ti Super 16GB: Best value pick. 16GB VRAM and 672 GB/s bandwidth.

4. RX 7900 XTX 24GB: AMD's best. 24GB for less than an RTX 4090. ROCm support is improving.

5. RTX 3090 24GB: Used-market bargain. Same VRAM as the 4090; older but capable.

6. Apple M3 Max (64-96GB unified): Best for 70B models. Unified memory lets a 70B Q4 model fit on a single machine.

7. RTX 4060 Ti 16GB: Best budget 16GB option. Lower bandwidth (288 GB/s) but plenty of VRAM.

8. RTX 4060 8GB: Entry-level. Handles 7B/8B models at Q4. Great starting point.

Why Memory Bandwidth Matters More Than You Think

LLM inference is memory-bandwidth-bound, not compute-bound. During generation, the GPU has to read essentially all of the model's weights from VRAM for every token it produces, so tok/s is capped at roughly memory bandwidth divided by model size. A GPU with higher bandwidth therefore generates tokens faster, even at the same VRAM capacity.

- RTX 4090: 1,008 GB/s bandwidth → fastest consumer GPU for inference

- RTX 4080 Super: 736 GB/s bandwidth → about 73% of the 4090's speed on large models

- RTX 4070 Ti Super: 672 GB/s bandwidth → excellent for a 16GB card

- RTX 4060 Ti: 288 GB/s bandwidth → under 40% of the 4080 Super's speed despite the same 16GB of VRAM

- Apple M3 Max: 400 GB/s bandwidth (40-core GPU configuration) → roughly half the 4080 Super's bandwidth, but enough unified memory to hold models no 16-24GB card can
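To put numbers on the bandwidth argument, here is a minimal Python sketch of the usual rule of thumb: since each generated token reads every weight once, decode speed can't exceed bandwidth divided by model size. The bandwidth figures come from the list above; the ~4.9 GB weight size for Llama 3.1 8B at Q4_K_M is an approximate assumption, and the results are theoretical ceilings, not benchmarks.

```python
# Rough upper bound on decode speed: every generated token streams all
# weights from memory once, so tok/s <= bandwidth / model size.

BANDWIDTH_GBPS = {
    "RTX 4090": 1008,
    "RTX 4080 Super": 736,
    "RTX 4070 Ti Super": 672,
    "RTX 4060 Ti": 288,
    "Apple M3 Max": 400,
}

MODEL_SIZE_GB = 4.9  # assumed weight size of Llama 3.1 8B at Q4_K_M


def max_tokens_per_second(bandwidth_gbps: float, model_size_gb: float) -> float:
    """Theoretical ceiling; real throughput is lower (kernel overhead, KV cache reads)."""
    return bandwidth_gbps / model_size_gb


def ceilings(model_size_gb: float = MODEL_SIZE_GB) -> dict[str, float]:
    """Ceiling for every GPU in the table, rounded to one decimal place."""
    return {gpu: round(max_tokens_per_second(bw, model_size_gb), 1)
            for gpu, bw in BANDWIDTH_GBPS.items()}


if __name__ == "__main__":
    for gpu, tps in ceilings().items():
        print(f"{gpu:>18}: <= {tps} tok/s")
```

Measured speeds land well below these ceilings because no runtime uses 100% of peak bandwidth, but the ordering between cards generally follows the bandwidth ordering.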

Tokens Per Second Benchmarks (Llama 3.1 8B Q4_K_M)

The RTX 4060 (8GB) actually outperforms the RTX 4060 Ti (16GB) per dollar for 7B models: its memory bandwidth (272 GB/s) is nearly the same as the 4060 Ti's (288 GB/s), but it costs considerably less. If you're running 7B models exclusively, the 4060 is the smarter buy.
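The per-dollar comparison can be sketched as bandwidth per dollar among cards that have enough VRAM for the model. This assumes launch MSRPs ($299 for the RTX 4060, $499 for the RTX 4060 Ti 16GB) and treats bandwidth as the proxy for tok/s; street prices vary, and the ~6 GB requirement in the usage example is an assumed figure for an 8B Q4 model with modest context.

```python
# Bandwidth per dollar as a rough proxy for tok/s per dollar, since decode
# speed scales with memory bandwidth once the model fits in VRAM.

CARDS = {
    # name: (VRAM in GB, bandwidth in GB/s, assumed launch MSRP in USD)
    "RTX 4060 8GB": (8, 272, 299),
    "RTX 4060 Ti 16GB": (16, 288, 499),
}


def bandwidth_per_dollar(bandwidth_gbps: float, price_usd: float) -> float:
    return bandwidth_gbps / price_usd


def rank_by_value(cards: dict, min_vram_gb: float) -> list[tuple[str, float]]:
    """Cards with enough VRAM for the model, best bandwidth-per-dollar first."""
    fits = [(name, bandwidth_per_dollar(bw, price))
            for name, (vram, bw, price) in cards.items()
            if vram >= min_vram_gb]
    return sorted(fits, key=lambda item: item[1], reverse=True)
```

`rank_by_value(CARDS, min_vram_gb=6)` puts the RTX 4060 first at about 0.91 GB/s per dollar versus about 0.58 for the 4060 Ti. Raise `min_vram_gb` above 8 and only the 4060 Ti remains, which is the real case for its extra VRAM.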

Apple Silicon: The Wildcard

Apple M-series chips use unified memory shared between CPU and GPU. An M3 Max with 64GB can load a 70B model in Q4 entirely into memory, something that requires dual RTX 4090s on a PC. The trade-off is lower raw bandwidth than a dedicated 4090 (400 GB/s versus 1,008 GB/s), but for 70B models it's the most accessible option.
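The 70B arithmetic works out as follows in a minimal sketch: weight size is parameters times bits per weight divided by 8, plus headroom for the KV cache and runtime buffers. The ~4.85 bits per weight for Q4_K_M and the 4 GB of headroom are assumptions, and the dual-4090 case is simplified by treating the two cards as one 48 GB pool.

```python
# Does a quantized model fit? Weights take params * bits-per-weight / 8 bytes,
# plus headroom (assumed 4 GB) for the KV cache and runtime buffers.

Q4_K_M_BPW = 4.85  # assumed average bits per weight for Q4_K_M


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    # (params_billion * 1e9) * bits / 8 bits-per-byte / 1e9 bytes-per-GB
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float, overhead_gb: float = 4.0) -> bool:
    return weights_gb(params_billion, bits_per_weight) + overhead_gb <= memory_gb


# Simplification: two 24 GB cards are treated as a single 48 GB pool.
SETUPS = {"single RTX 4090": 24, "dual RTX 4090": 48, "M3 Max 64GB": 64}

for name, mem in SETUPS.items():
    verdict = "fits" if fits(70, Q4_K_M_BPW, mem) else "does not fit"
    print(f"70B Q4 on {name} ({mem} GB): {verdict}")
```

With these assumptions the weights come to about 42.4 GB, so the loop reports that a single 4090 can't hold the model while the dual-4090 and 64GB M3 Max setups can.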

Budget Recommendations by Use Case

- Under $300: RTX 4060 8GB. Runs 7B models at Q4 quickly. Perfect starter.

- $400-600: RTX 4070 12GB or used RTX 3080 10GB. More headroom.

- $600-900: RTX 4070 Ti Super 16GB. Best value overall.

- $1,000-1,500: RTX 4080 Super 16GB or used RTX 3090 24GB.

- $1,800-2,000: RTX 4090 24GB. The top pick if budget allows.

- No ceiling: Apple M3 Ultra (Mac Studio, up to 512GB unified memory). Runs models far larger than 70B. Desktop only; for a laptop, the M3 Max MacBook Pro is the closest option.

Verify Before You Buy

Before committing to a GPU, enter the specs of the card you're considering at runyard.dev. The Model Radar will show exactly which models fit, at what quantization, and what real-world tok/s to expect, so you can confirm the card suits your workload.
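A hypothetical version of the fit check such a tool reports might pick the highest-precision quantization that still fits in VRAM. This sketch makes no claim about how runyard.dev computes its results; the quantization names are llama.cpp GGUF types, but their bits-per-weight values are approximate averages (assumed), as is the 2 GB overhead allowance.

```python
from typing import Optional

# Approximate average bits per weight (assumed), highest precision first.
QUANT_BPW = [
    ("Q8_0", 8.5),
    ("Q6_K", 6.56),
    ("Q5_K_M", 5.69),
    ("Q4_K_M", 4.85),
    ("Q3_K_M", 3.91),
]


def best_quant(params_billion: float, vram_gb: float,
               overhead_gb: float = 2.0) -> Optional[str]:
    """Return the highest-precision quantization whose weights plus an
    assumed overhead fit in vram_gb, or None if even the smallest is too big."""
    for name, bpw in QUANT_BPW:
        if params_billion * bpw / 8 + overhead_gb <= vram_gb:
            return name
    return None
```

For example, `best_quant(8, 8)` returns `"Q5_K_M"` for an 8B model on an 8GB card, while `best_quant(70, 24)` returns `None`, matching the point that 70B models don't fit on a single 24GB GPU.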
